For the past decade, most smart devices have worked the same way: you speak a command or tap a button, your request gets sent to a data center hundreds of miles away, it gets processed by powerful servers, and the result comes back to your device. It's fast enough that you barely notice — until your internet goes down and everything stops working.

On-device AI is changing that equation. In 2026, a growing number of gadgets can run many of their AI features without contacting the cloud. Here's why that matters and what it means for the devices you use every day.

What Is On-Device AI?

On-device AI — sometimes called edge AI — means running artificial intelligence models directly on the hardware in your hand, on your wrist, or in your home. Instead of sending data to a remote server for processing, the device handles everything locally using specialized chips designed for AI workloads.

This isn't entirely new. Smartphones have used basic on-device processing for face recognition and voice wake words for years. What's changed in 2026 is the scale and sophistication. Modern neural processing units can now run compact language models, image recognition, and real-time translation entirely on a phone or laptop, and smaller models fit on devices as small as a pair of earbuds.

Why the Shift Away From Cloud AI?

Cloud-based AI works well when you have a fast, stable internet connection. But it has three fundamental problems that on-device AI addresses.

Latency. Every round trip to the cloud adds delay. For real-time applications such as live translation, augmented reality overlays, and camera scene detection, even a hundred milliseconds of lag can ruin the experience. On-device processing removes the network hop, and small models can respond within a few milliseconds.

Privacy. When your voice commands, photos, health data, and browsing habits travel to remote servers, you're trusting that company to handle your data responsibly. On-device AI keeps sensitive information on your hardware. When a feature runs entirely on-device, your voice doesn't need to leave your phone and your health metrics don't need to leave your wrist. For privacy-conscious users, this is a game-changer.

Reliability. Cloud AI fails when your internet fails. On-device AI works anywhere: on a plane, in a rural area with spotty coverage, during a network outage. Features that run locally keep working regardless of connectivity.
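
To make the latency point concrete, here is a minimal sketch of a response-time budget. Every number in it is an illustrative assumption rather than a measurement, and the class and function names are made up for this example. It shows that the cloud path can miss a real-time budget even when the server's model is faster than the one on the device, because the network round trip dominates.

```python
from dataclasses import dataclass


@dataclass
class CloudPath:
    # All values are hypothetical, chosen only to illustrate the arithmetic.
    network_round_trip_ms: float  # device -> data center -> device
    server_queue_ms: float        # waiting for a free server
    server_inference_ms: float    # model run time on the server

    def total_ms(self) -> float:
        return (self.network_round_trip_ms
                + self.server_queue_ms
                + self.server_inference_ms)


@dataclass
class LocalPath:
    device_inference_ms: float    # model run time on the device itself

    def total_ms(self) -> float:
        return self.device_inference_ms


def fits_budget(total_ms: float, budget_ms: float) -> bool:
    """Return True if the response arrives within the real-time budget."""
    return total_ms <= budget_ms


# Assumed figures: the server is faster at inference, but pays for the network.
cloud = CloudPath(network_round_trip_ms=80.0, server_queue_ms=10.0,
                  server_inference_ms=5.0)
local = LocalPath(device_inference_ms=8.0)
budget_ms = 33.0  # roughly one video frame at 30 frames per second

print("cloud:", cloud.total_ms(), "ms, in budget:",
      fits_budget(cloud.total_ms(), budget_ms))
print("local:", local.total_ms(), "ms, in budget:",
      fits_budget(local.total_ms(), budget_ms))
```

Real figures vary widely with the network, the model, and the chip, so treat this as a way to reason about the trade-off, not as a benchmark.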

Where On-Device AI Shows Up in 2026

The technology is spreading rapidly across device categories. Here are the areas where you'll notice it most.

Smartphones are leading the charge. Current flagship phones run AI models with billions of parameters directly on-chip. This powers features like real-time photo enhancement, intelligent text suggestions that understand context across apps, live conversation translation, and smart summaries of long documents, and the features that run fully on-device do all of this without sending your data to the cloud.

Laptops and tablets now include dedicated neural processing units alongside traditional CPUs and GPUs. This enables features like real-time video background replacement during calls, intelligent noise cancellation that adapts to your specific environment, and local document search that understands natural language queries.

Wearables are perhaps the most impressive use case. Smartwatches and fitness trackers now run health models locally that can flag irregular heart rhythms, spot signs of sleep disorders, and track blood glucose trends when paired with a glucose sensor. Processing the continuous sensor data on the device also spares the battery, because the radio no longer has to stay in constant contact with a server.

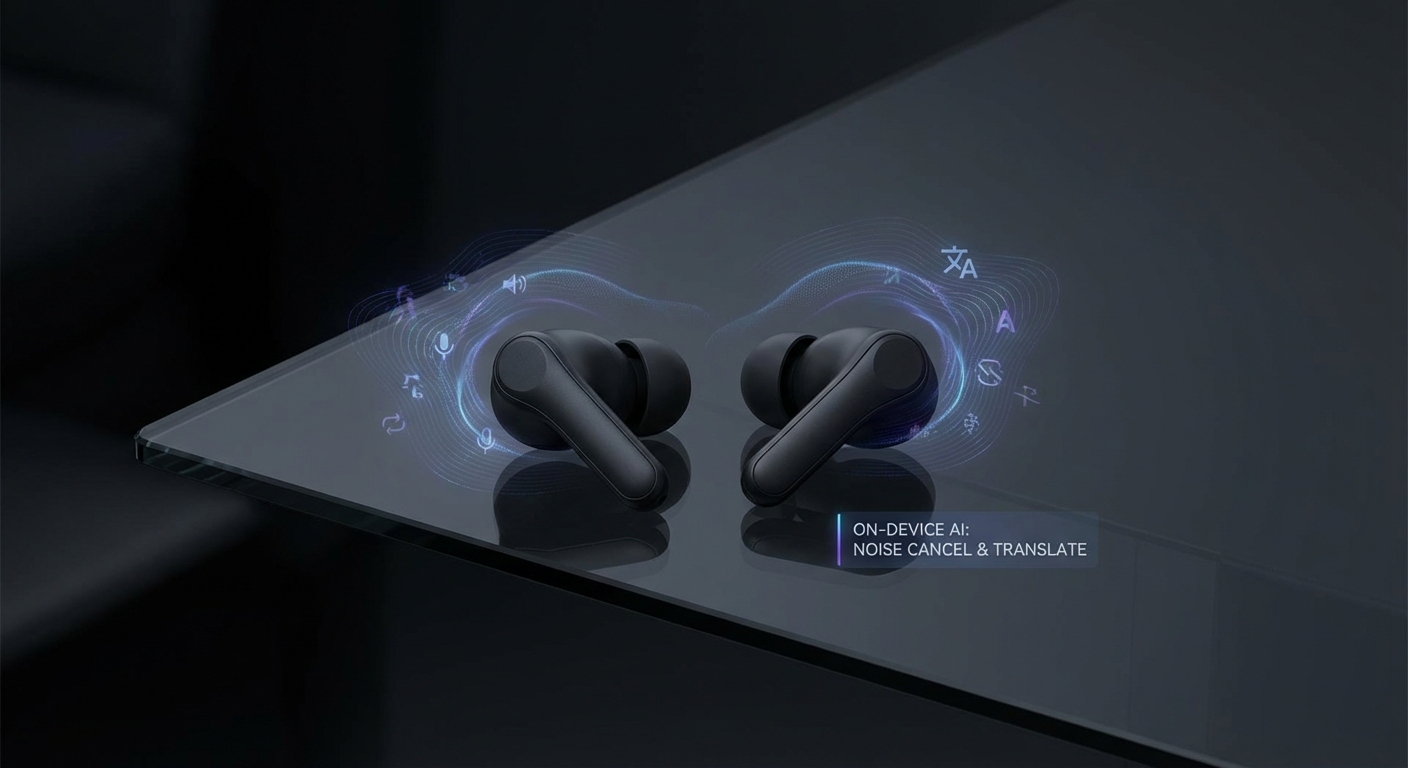

Earbuds and headphones use on-device AI for adaptive noise cancellation that learns your preferences, real-time language translation during conversations, and voice isolation that picks your voice out of a crowded room.

Home devices including cameras, doorbells, and sensors now run person detection, package recognition, and anomaly detection locally. Your security camera can tell the difference between a person and a cat without uploading footage to anyone's server.

The Technical Breakthrough Behind It

What makes this possible is a combination of hardware and software advances working together.

On the hardware side, neural processing units have become dramatically more efficient. Modern chips can perform trillions of AI operations per second within the tight power budget of a battery-powered device. These specialized processors handle AI workloads far more efficiently than general-purpose CPUs.
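
The efficiency argument comes down to simple arithmetic: energy per inference is power multiplied by the time the chip spends on the job. The sketch below works through that calculation. The throughput, power, and model-size figures are hypothetical placeholders, not specifications of any real chip.

```python
def inference_time_s(ops_per_inference: float, ops_per_second: float) -> float:
    """Time for one inference, assuming the chip sustains its rated throughput."""
    return ops_per_inference / ops_per_second


def energy_joules(power_watts: float, seconds: float) -> float:
    """Energy is power multiplied by time."""
    return power_watts * seconds


def inferences_per_charge(battery_wh: float, joules_per_inference: float) -> float:
    """How many inferences a battery could fund if it powered nothing else."""
    battery_joules = battery_wh * 3600.0  # 1 watt-hour = 3600 joules
    return battery_joules / joules_per_inference


# Hypothetical workload and chips, for illustration only.
OPS_PER_INFERENCE = 2e9           # assumed cost of one model run

npu_joules = energy_joules(0.5, inference_time_s(OPS_PER_INFERENCE, 2e12))
cpu_joules = energy_joules(3.0, inference_time_s(OPS_PER_INFERENCE, 1e11))

print("NPU joules per inference:", npu_joules)
print("CPU joules per inference:", cpu_joules)
print("NPU advantage (x):", cpu_joules / npu_joules)
print("NPU inferences per 1 Wh:", inferences_per_charge(1.0, npu_joules))
```

The specialized chip wins twice in this model: it finishes sooner and draws less power while running, and the two effects multiply.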

On the software side, model compression techniques have made large AI models much smaller without significant quality loss. Quantization stores a model's weights at lower numeric precision, pruning removes weights that contribute little to the output, and knowledge distillation trains a small model to mimic a large one. Together, these techniques shrink models that once required a data center into versions that run on a chip the size of a fingernail.
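
Two of these techniques are simple enough to sketch in a few lines. The example below shows symmetric 8-bit quantization and magnitude pruning on a toy list of weights. It is a simplified illustration with made-up values and function names; production toolchains work per layer or per channel and usually fine-tune the model afterwards. Knowledge distillation involves training and is not shown.

```python
def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest magnitudes."""
    n_prune = int(len(weights) * fraction)
    smallest_first = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(smallest_first[:n_prune])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


# Toy weights, chosen only for illustration.
weights = [0.42, -1.27, 0.03, 0.88, -0.05, 0.61]

q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
pruned = prune_by_magnitude(weights, 0.5)

print("int8 values:", q)
print("largest rounding error:", max_error)
print("after pruning half:", pruned)
```

Storing each weight as an 8-bit integer instead of a 32-bit float cuts its storage to a quarter, and the rounding error per weight stays within half a quantization step. Pruned weights are exact zeros, which can then be stored and computed sparsely.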

What This Means for You as a Buyer

When shopping for new devices in 2026, on-device AI capability is quickly becoming one of the most important specifications to evaluate. Here's what to look for.

Check for a dedicated neural processing unit. Devices with specialized AI hardware will generally outperform those trying to run AI on general-purpose processors. Product specs should mention an NPU, neural engine, or AI accelerator.

Ask what works offline. The true test of on-device AI is what still functions when you disconnect from the internet. If a "smart" feature requires constant cloud connectivity, it's cloud AI with a marketing label, not genuine on-device intelligence.

Evaluate the privacy model. On-device AI should mean your data stays on your device. Read the privacy policy — some manufacturers process data locally but still upload analytics or telemetry. The best implementations keep everything local by default.

Consider the update path. On-device AI models improve over time through software updates. Devices from manufacturers with strong update track records will get smarter throughout their lifespan. A device that ships with good AI today but never gets model updates will fall behind quickly.

The Hybrid Future

On-device AI won't completely replace cloud processing. Some tasks — training new models, processing massive datasets, running the most complex generative AI — still benefit from the raw power of cloud infrastructure.

The emerging model is hybrid: devices handle routine AI tasks locally for speed, privacy, and reliability, while offloading only the most demanding requests to the cloud. You get the best of both worlds — instant, private processing for everyday use and cloud power when you truly need it.
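
One way to picture the hybrid model is as a small routing decision made for each request. The sketch below is a hypothetical policy, not how any particular vendor does it: the `Request` fields, the `route` function, and the compute threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    CLOUD = "cloud"


@dataclass
class Request:
    task: str
    estimated_ops: float            # rough compute cost of the request
    contains_sensitive_data: bool   # e.g. voice, photos, health data


def route(request: Request, local_ops_limit: float, online: bool) -> Target:
    """Pick where to run a request under a simple, assumed policy."""
    # Privacy and reliability first: sensitive or offline requests stay local.
    if request.contains_sensitive_data or not online:
        return Target.LOCAL
    # Only requests too heavy for the device are offloaded.
    if request.estimated_ops > local_ops_limit:
        return Target.CLOUD
    return Target.LOCAL


LOCAL_OPS_LIMIT = 1e10  # hypothetical ceiling for what the device handles well

wake_word = Request("wake word", 1e8, contains_sensitive_data=True)
big_summary = Request("long document summary", 5e12, contains_sensitive_data=False)

print(route(wake_word, LOCAL_OPS_LIMIT, online=True))
print(route(big_summary, LOCAL_OPS_LIMIT, online=True))
print(route(big_summary, LOCAL_OPS_LIMIT, online=False))
```

A real system would also need to decide what happens when a sensitive request is too heavy for the device, for example by running a smaller model or asking the user before uploading anything.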

The Bottom Line

On-device AI represents a fundamental shift in how our gadgets work. Instead of being dumb terminals that depend on a remote brain, devices are becoming genuinely intelligent on their own. The result is faster performance, stronger privacy, and technology that works everywhere — not just where you have a Wi-Fi signal.

As you shop for your next phone, laptop, watch, or pair of earbuds, on-device AI capability should be near the top of your checklist. The smartest device is the one that doesn't need to phone home to think.